F1 ML Notes

F1-ml X auto-research.

As a Mercedes F1 fan, it has been a refreshing start to 2026, almost like the feeling of lockdown lifting.

We finally have decent cars, and that's convincing enough for me to wake up at 6am on a Sunday, make a coffee, and watch the race.

However, I wanted something more to keep me obsessed with the race weekends and locked in, so I decided to try some ML that learns and predicts as the session and the season go on. I also wanted to learn more about Cloudflare's developer platform, so it made sense to build the page and everything behind it at https://irvyn.us/f1

To explain what it is from an architecture standpoint:

----------------------------------------------------------------------------------

crons -> hourly + 5-min + 1-min triggers on race-weekend days

workflow binding -> a step-by-step large TS file for scraping the start times of f1 sessions

durable object -> helps ^ by effectively being a durable version of the data ^ produces

buckets -> F1_PRIVATE_BUCKET + F1_PUBLIC_BUCKET (S3-compatible buckets, called R2 in CF world)

containers -> ReplayPublisherContainer + LiveSourceBridgeContainer

----------------------------------------------------------------------------------

                                 |
                                 v
                   +-----------------------------+
                   |           Worker            |
                   | reconcile schedule + start  |
                   +-------------+---------------+
                                 |
              +------------------+------------------+
              |                                     |
              v                                     v
+-----------------------------+       +-----------------------------+
|             R2              |       |       Durable Object        |
| persist plans + "overrides" |       | keep active session state   |
+-------------+---------------+       +-------------+---------------+
              |                                     |
              +------------------+------------------+
                                 |
                                 v
                   +-----------------------------+
                   |          Workflow           |
                   | wait, then start "runners"  |
                   +-------------+---------------+
                                 |
                                 v
                   +-----------------------------+
                   |         Containers          |
                   | run ML, write JSON, stop    |
                   +-------------+---------------+
                                 |
                                 v
                   +-----------------------------+
                   |             R2              |
                   | store published JSON and    |
                   | let this site read it       |
                   +-----------------------------+

Sure, most of this can be done on one VPS with Go, but with Cloudflare you get the above primitives, like Containers and serverless Workers (which are fast and spread across many geographic locations, since CF has its own data centers), on a free or cheap $5/month tier, and they can scale to let you do some cool stuff.

So the main idea of the above is:

The page at /f1 is essentially static HTML + JS + CSS. While a session is live, it polls latest.json and consumes the live ML predictions.

Obviously, the pattern above is trivial enough that a decent SWE, or anyone with an LLM coding partner, could set it up, but I wanted to run more experiments on the actual underlying ML, so naturally I did. I had been reading about this thing called Pi and how it can be used for "autoresearch".

I knew the starting point should be boring.

Not a giant model, not some overfit racing oracle, just a small tabular model that could deal with a noisy weekend state and publish stable JSON for the page. The core lane was basically sklearn-style structured prediction over canonical race-weekend rows, then a projection layer on top that turns those raw outputs into something the page can actually render.

That was enough to get something live, but it also made the weak spots obvious very quickly. The Japan weekend is what really exposed it: the model could see strong McLaren practice evidence and still project them too far down because priors and post-processing were overpowering the current weekend.

Classic tabular ML stack, with win, podium, and top10 as the prediction targets.

So mentally it was something like:

driver/weekend snapshot
        |
        v
[feature row]
    team=McLaren
    stage=post_practice
    prior_team_rating=...
    practice_trimmed_pace_gap_s=...
    qualifying_rank=...
    top_speed_delta_kph=...
    weather=...
        |
        v
[DictVectorizer]
    turn mixed fields into model matrix
        |
        v
[small sklearn models]
    P(win), P(podium), P(top10), expected_finish
        |
        v
[calibration + projection logic]
    smooth probabilities
    rank field
    build finish intervals
        |
        v
[event_outlook.json]
    what /f1 actually renders

The important part is how naive it was in a good way. It was not trying to learn some magical hidden representation of Formula 1. It was mostly saying:

"Given the priors, the current session evidence, and a few race-context features, what is the probability this driver wins / podiums / finishes in the top 10, and what finish position does that imply?"
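To make that concrete, here is a minimal sketch of the DictVectorizer-plus-small-model idea for a single target (podium). It is not the production code: the rows, labels, and feature values are invented for illustration, and the choice of LogisticRegression is my assumption about what "small sklearn model" could look like.

```python
from sklearn.feature_extraction import DictVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline

# Toy rows with invented values, purely for illustration (not real F1 data).
rows = [
    {"team": "McLaren", "stage": "post_practice", "prior_team_rating": 0.9, "practice_trimmed_pace_gap_s": 0.05},
    {"team": "Mercedes", "stage": "post_practice", "prior_team_rating": 0.8, "practice_trimmed_pace_gap_s": 0.10},
    {"team": "Williams", "stage": "post_practice", "prior_team_rating": 0.4, "practice_trimmed_pace_gap_s": 0.90},
    {"team": "Haas", "stage": "post_practice", "prior_team_rating": 0.3, "practice_trimmed_pace_gap_s": 1.10},
]
podium = [1, 1, 0, 0]  # hypothetical labels: 1 = finished on the podium

# DictVectorizer one-hot encodes string fields and passes numeric fields through.
model = make_pipeline(DictVectorizer(sparse=False), LogisticRegression())
model.fit(rows, podium)

# Column 1 of predict_proba is P(podium) because the classes are [0, 1].
p_podium = model.predict_proba(rows)[:, 1]
for row, p in zip(rows, p_podium):
    print(f"{row['team']:<10} P(podium) = {p:.2f}")
```

The same shape would repeat for win and top10, with the projection layer sitting on top of the raw probabilities.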

That is exactly why autoresearch was useful. Once the baseline was simple enough to understand, the failure modes were also simple enough to debug. The problem was not "the model needs to be bigger". The problem was "this specific pipeline is weighting the wrong things at the wrong time."

pi-autoresearch is the loop I used to attack that problem. Zoomed out, it is not "an AI model". It is experiment infrastructure for an agent.

The useful mental model is:

- pi-autoresearch is the extension/skill that turns the Pi runtime into an edit -> benchmark -> log -> keep/discard loop.
- autoresearch.sh is the judge.

That separation matters. I did not want to build a one-off API harness that generated patches and hoped for the best. I already had a Codex subscription, and what I actually needed was a repo-aware coding agent with shell access, git access, file editing, and enough context to keep iterating inside a real Python project. In this setup Pi handled the experiment loop and UI, while codex exec --full-auto --json acted as the worker that actually made changes and ran the repo.

In the F1 shadow workspaces I pinned pi-autoresearch to upstream commit 62feb2f46ef2a1b8e39af381b47acc4d7af42ca8, seeded the run with an autoresearch.md file describing the objective and guardrails, and let the loop work from there. Each experiment had:

- autoresearch.sh
- autoresearch.checks.sh
- autoresearch.config.json
- autoresearch.jsonl
- autoresearch.state.json
- summary.json and summary.md

That made the whole thing much more verifiable than "I prompted a model a lot and vibes-checked the output". A run either improved the benchmark under the same contract or it did not. Kept runs survived as commits. Discarded runs were reverted but still logged. The notes in autoresearch.md acted as the seed file and memory for the next iteration.
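The keep/discard contract is easy to sketch in a few lines. This is a toy illustration, not pi-autoresearch's actual implementation or log schema: run_loop, the record fields, and the scores are all hypothetical, and I am assuming a lower score is better.

```python
import json

def run_loop(experiments, baseline_score):
    """Keep a run only if it beats the best score so far; log every run either way."""
    best = baseline_score
    log_lines = []
    for name, score in experiments:
        kept = score < best  # lower is better in this sketch
        if kept:
            best = score  # a real loop would commit the change here
        # A real loop would revert the working tree for a discarded run.
        record = {"run": name, "score": score, "status": "keep" if kept else "discard"}
        log_lines.append(json.dumps(record))  # one JSON object per line, JSONL-style
    return best, log_lines

# Hypothetical scores, chosen only to show one discard between two keeps.
best, log_lines = run_loop(
    [("exp-1", 0.205), ("exp-2", 0.208), ("exp-3", 0.199)],
    baseline_score=0.210,
)
for line in log_lines:
    print(line)
```

The point of logging the discarded run as well is that the next iteration can see what was already tried and failed.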

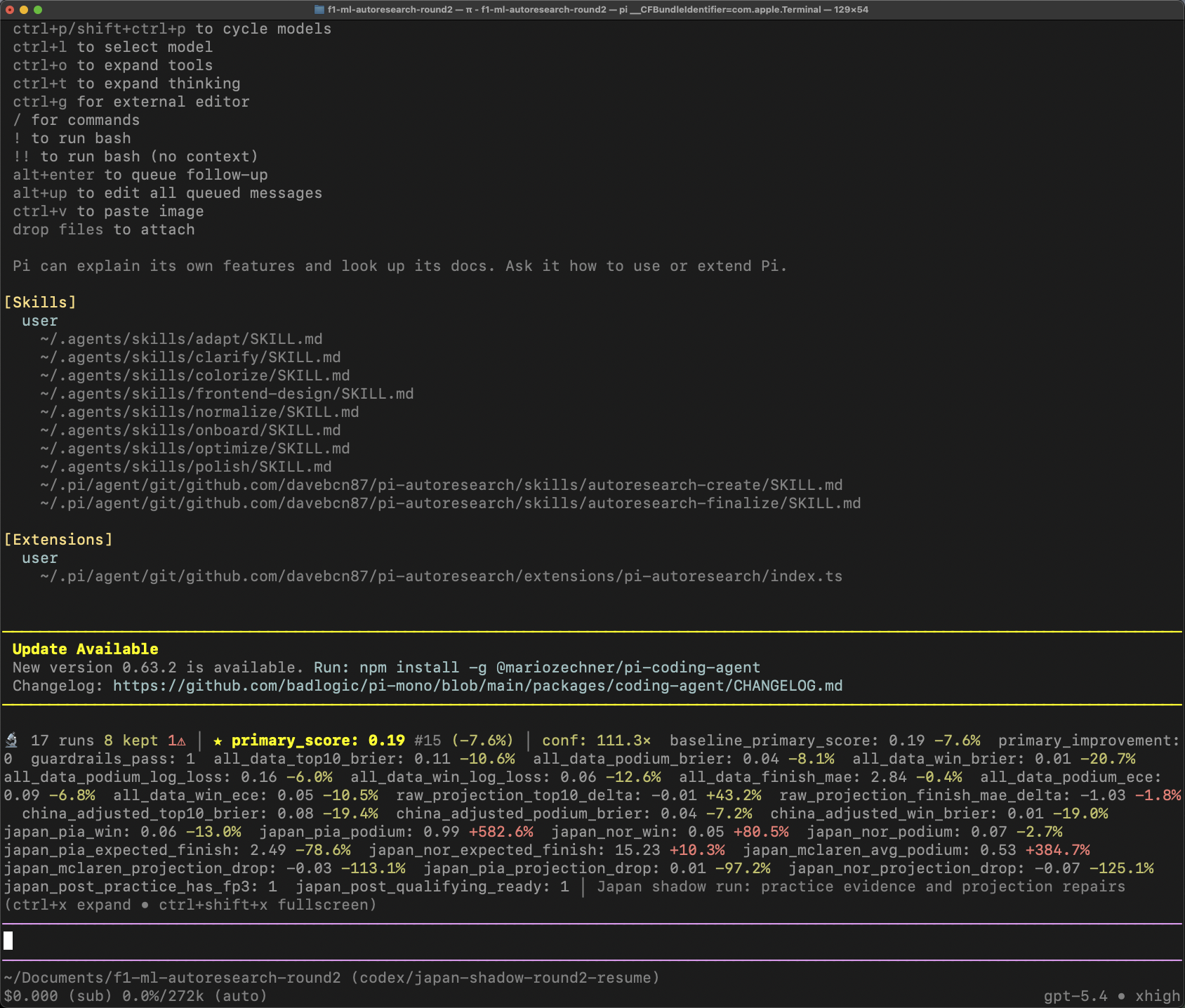

The terminal view while it was running looked like this:

The small dashboard detail I liked most is that it makes the loop legible. You can see how many runs happened, how many were kept, the current primary score, the confidence multiple, and a bunch of second-order metrics that stop you from accidentally "improving" the benchmark by breaking the thing you actually care about.

Round 1 was the direct response to the Japan post-practice failure mode: McLaren looked too weak even when the weekend evidence said the opposite.

This round taught the main lesson of the whole exercise: the bug was not "the model is too simple". The bug was that priors and projection logic were crushing current-weekend evidence.

The changes that actually mattered were:

The baseline proxy had primary_score = 0.210087 and a very bad japan_mclaren_projection_drop = +0.2526. The stable Round 1 lane got to about 0.1987 and flipped that projection drop negative. That was the actual win: stop the projection layer from making an already plausible McLaren read worse.

What I cared about enough to keep:

This was the round that produced the most obviously production-worthy behavior changes. It was not glamorous, but it made the live model less dumb.

What I explicitly did not want to keep:

That last point matters a lot. One of the discarded lanes got the raw score down to 0.19046, but it was still discarded because it made the real-world behavior worse again. That was the point of running the loop with hard side metrics instead of a single scalar and calling it done.
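A sketch of what "hard side metrics" means in practice: a run has to beat the incumbent on the primary score and also stay inside a guard on the metric I actually care about. The scores below are the ones quoted above. The projection-drop values for the two later runs, the max_drop threshold, and the assumption that the discarded lane tripped this particular guard are mine, for illustration only.

```python
from dataclasses import dataclass

@dataclass
class Run:
    name: str
    primary_score: float    # lower is better
    projection_drop: float  # stand-in for japan_mclaren_projection_drop; > 0 means McLaren gets demoted

def decide(candidate: Run, incumbent: Run, max_drop: float = 0.0) -> str:
    """Keep only if the primary score improves AND the guard metric holds."""
    better_score = candidate.primary_score < incumbent.primary_score
    guard_ok = candidate.projection_drop <= max_drop
    return "keep" if better_score and guard_ok else "discard"

baseline = Run("baseline", 0.210087, 0.2526)     # both figures quoted above
round1 = Run("round1-stable", 0.1987, -0.01)     # score quoted above; drop value hypothetical (only its sign is known)
tempting = Run("low-score-lane", 0.19046, 0.05)  # score quoted above; drop value hypothetical

print(decide(round1, baseline))   # better score and guard holds
print(decide(tempting, round1))   # better score, but the guard fails
```

With a single scalar the low-score lane would have won. With the guard in place it loses, which is the behavior I wanted.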

Round 2 started from the kept Round 1 checkpoint and asked a better question: if Round 1 fixed the obvious symptom, what is the more structural version of that fix?

The two ideas that mattered were a guardrail on the projection layer and a pace-vs-reliability split in the priors.

The guardrail idea is very software-engineering-coded: if the raw model already says a front-runner looks strong and the practice evidence is real, the post-processing layer should only be allowed to demote that driver by a tiny amount. If the projection layer is about to do something obviously dumb, put a boundary around it.
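As a rough sketch of that boundary, assuming ranks where 1 is best: guard_projection, front_runner_cutoff, and max_demotion are hypothetical names and values, not what the real projection layer uses.

```python
def guard_projection(raw_rank: int, projected_rank: int, practice_rank: int,
                     *, front_runner_cutoff: int = 3, max_demotion: int = 1) -> int:
    """Limit how far post-processing may demote a driver that both the raw
    model and the practice evidence rate as a front-runner."""
    is_front_runner = raw_rank <= front_runner_cutoff
    has_practice_evidence = practice_rank <= front_runner_cutoff
    if is_front_runner and has_practice_evidence:
        # Promotions pass through untouched; demotions are capped.
        return min(projected_rank, raw_rank + max_demotion)
    return projected_rank

print(guard_projection(raw_rank=2, projected_rank=7, practice_rank=1))   # demotion gets capped
print(guard_projection(raw_rank=8, projected_rank=12, practice_rank=9))  # not a front-runner, left alone
```

The guard only ever limits demotions, so it cannot make a weak driver look strong. It just stops the projection layer from overruling evidence that two independent signals agree on.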

The pace-vs-reliability split was the more ML-shaped idea. Earlier DNFs and reliability chaos were bleeding too directly into pace-sensitive priors. Round 2 separated "this car was fast" from "this weekend ended badly", which is much closer to how you would actually reason about a Formula 1 team.
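One way to picture the split, as a toy sketch rather than the actual prior code: compute the pace prior from every weekend regardless of how it ended, and track finishing separately. The history rows, field names, and split_priors are all invented for illustration.

```python
from statistics import mean

# Hypothetical past weekends for one team: pace gap to the fastest car in
# seconds, and whether the car reached the finish.
history = [
    {"pace_gap_s": 0.10, "finished": True},
    {"pace_gap_s": 0.05, "finished": False},  # fast, but a DNF
    {"pace_gap_s": 0.15, "finished": True},
    {"pace_gap_s": 0.08, "finished": False},  # fast, but a DNF
]

def split_priors(results):
    """Return separate pace and reliability priors instead of one blended rating."""
    pace_gap = mean(r["pace_gap_s"] for r in results)  # DNF weekends still count as pace evidence
    finish_rate = mean(1.0 if r["finished"] else 0.0 for r in results)
    return {"pace_gap_s": pace_gap, "finish_rate": finish_rate}

priors = split_priors(history)
print(priors)
```

In this toy history the car is consistently quick but only finishes half the time. A single blended rating would drag the pace estimate down. Keeping two numbers lets the pace-sensitive features stay honest about speed.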

Round 2 improved the guarded benchmark again to 0.194170, but it also produced a very useful warning: the shadow artifact itself was not automatically production-ready just because the benchmark improved. The Japan panel became too Piastri-heavy and still left Norris too low, so the right thing to keep was the code-level insight, not the full shadow output.

So for production I cared much more about the guardrail logic and the shape of the prior fix than I cared about promoting the exact Round 2 shadow bundle.

So the production-facing summary from both rounds is basically this:

That is also why I liked the pi-autoresearch loop for this. It was not just searching for lower numbers. It was forcing me to learn and understand what "better" actually meant, record what failed, and keep the bits that were portable back into the real f1-ml container instead of blindly shipping the best-looking shadow run. I think future ML work that does not explore loops like this for automatic iterative improvement is time wasted.